
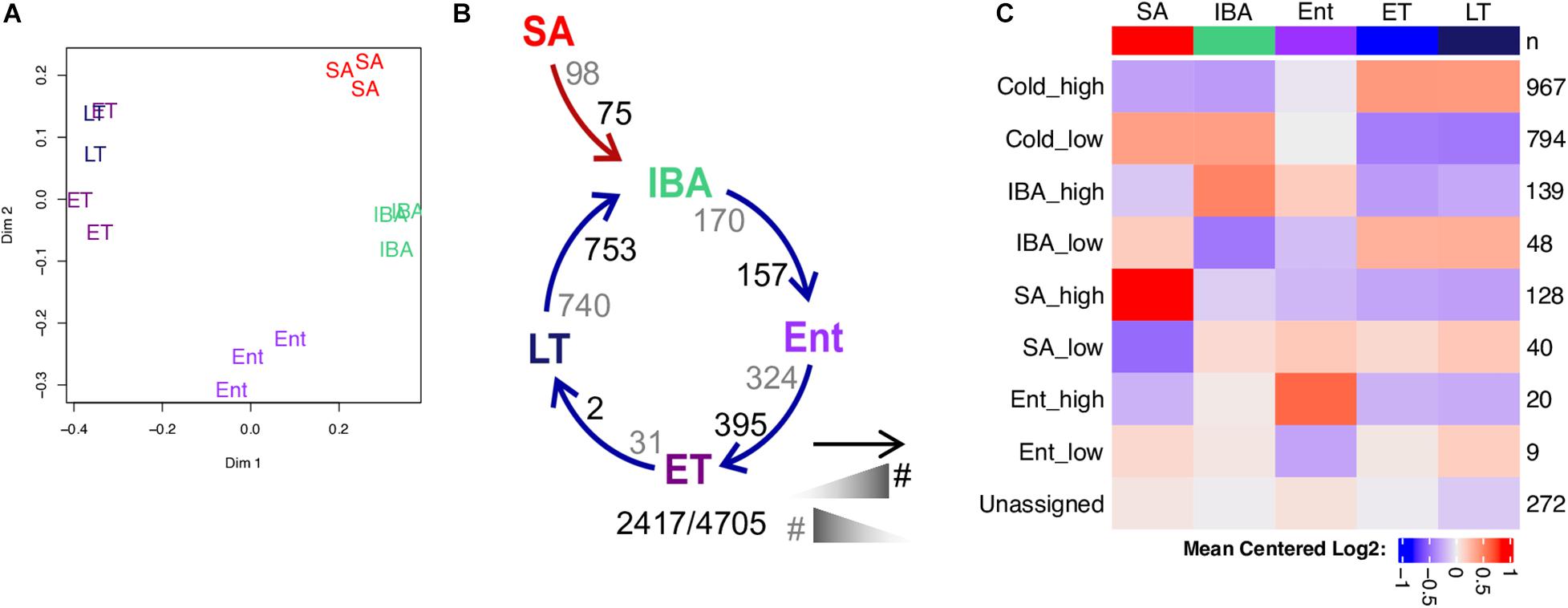

Random Forest in R

A random forest is built by aggregating many decision trees, and it can be used for both classification and regression. One of its major advantages is that it is much less prone to overfitting than a single decision tree. A random forest can handle a large number of features, and it helps identify the most important attributes.

The random forest has two user-friendly parameters:

- `ntree`: the number of trees to grow.
- `mtry`: the number of variables randomly sampled as candidates at each split.

The algorithm works as follows:

1. Draw `ntree` bootstrap samples from the training data.
2. For each bootstrap sample, grow an un-pruned tree by choosing the best split based on a random sample of `mtry` predictors at each node.
3. Predict new data using the majority vote (classification) or the average (regression) across the `ntree` trees.

```r
library(randomForest)  # provides randomForest objects and the treesize(), partialPlot() and plot() methods used below
library(caret)
```

The confusion-matrix statistics include `P-Value [Acc > NIR] : 5.448e-15` and the following detection prevalences:

| Statistic | setosa | versicolor | virginica |
|---|---|---|---|
| Detection Prevalence | 0.3409 | 0.2727 | 0.3864 |

Test data accuracy is 90%.

Error rate of Random Forest

```r
plot(rf)  # error rates as the number of trees grows
```

The model predicts with high accuracy, so no further tuning is needed. However, `ntree` and `mtry` can still be tuned if desired.

No. of nodes for the trees

```r
hist(treesize(rf))  # distribution of the number of terminal nodes per tree
```

Petal.Length is the most important attribute, followed by Petal.Width.

Partial Dependence Plot

```r
partialPlot(rf, train, Petal.Width, "setosa")
```

The inference is that when the petal width is less than 1.5, a flower is more likely to be classified as setosa.

Multi-dimensional Scaling Plot of Proximity Matrix

A dimension plot can also be created from the random forest model.

The post Random Forest in R appeared first on finnstats.

This paper presents an unsupervised clustering random-forest-based metric for affinity estimation in large and high-dimensional data. The criterion used for node splitting during forest construction can handle rank deficiency when measuring cluster compactness. The binary forest-based metric is extended to continuous metrics by exploiting both the common traversal path and the smallest shared parent node. The proposed forest-based metric efficiently estimates affinity by passing data pairs down the forest using a limited number of decision trees. A pseudo-leaf-splitting (PLS) algorithm is introduced to account for spatial relationships; it regularizes affinity measures and overcomes inconsistent leaf assignments. The random-forest-based metric with PLS facilitates the establishment of consistent point-wise correspondences. The proposed method has been applied to automatic phrase recognition using color and depth videos, and to point-wise correspondence. Extensive experiments demonstrate the effectiveness of the proposed method for affinity estimation in comparison with the state of the art.
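Two ideas on this page rest on the same notion: a forest induces a similarity between samples. One is the proximity matrix behind the multi-dimensional scaling plot in the Random Forest in R post. The other is the binary forest-based metric in the paper abstract.

The minimal sketch below treats that notion in its simplest form. It scores two samples by the fraction of trees in which they land in the same leaf. It then embeds the samples in 2-D with classical MDS (`stats::cmdscale`) applied to `1 - proximity`. A few things are assumptions for illustration. The `leaves` matrix (one row per sample, one column per tree, entries are terminal-node IDs) and its toy values are hypothetical. So is the helper name `forest_proximity`. This is the standard same-leaf proximity, not the paper's PLS-regularized metric.

```r
# Hypothetical helper: binary forest affinity (same-leaf proximity).
# `leaves` is an n x ntree matrix of terminal-node IDs: row = sample, column = tree.
forest_proximity <- function(leaves) {
  n <- nrow(leaves)
  prox <- matrix(0, n, n)
  for (i in seq_len(n)) {
    for (j in seq_len(n)) {
      # mean of a logical vector = fraction of trees where i and j share a leaf
      prox[i, j] <- mean(leaves[i, ] == leaves[j, ])
    }
  }
  prox
}

# Toy example (made-up leaf IDs): 4 samples passed down 4 trees.
leaves <- matrix(c(
  1, 1, 1, 1,
  2, 2, 1, 1,
  1, 1, 2, 2,
  2, 2, 2, 2
), nrow = 4, byrow = TRUE)

prox <- forest_proximity(leaves)
print(prox)

# Classical MDS on the dissimilarity 1 - proximity gives 2-D coordinates
# that can be plotted to inspect how the forest groups the samples.
coords <- cmdscale(as.dist(1 - prox), k = 2)
print(coords)
```

With the randomForest package, fitting with `proximity = TRUE` stores a matrix of this kind in `rf$proximity`. The package documents that matrix as the fraction of trees in which two cases fall in the same terminal node. The double loop above favors readability over speed.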

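The abstract says the binary metric is extended to a continuous one by using the common traversal path and the smallest shared parent node. It does not give the exact formula. The sketch below is therefore a hypothetical illustration of that idea, not the authors' method.

It assumes binary trees whose nodes are numbered in heap order: the root is node 1, and the children of node `k` are `2k` and `2k + 1`. For each tree, it finds the deepest shared ancestor of the two leaves that a pair of samples reaches. It scores the pair by that ancestor's depth divided by the depth of the deeper leaf, so a shared leaf scores 1 and paths that split at the root score 0. The per-tree scores are then averaged. All function names here are made up for the example.

```r
# Hypothetical continuous forest affinity from shared traversal paths.
# Assumes heap-numbered binary trees: root = 1, children of node k are 2k and 2k + 1.

node_depth <- function(k) {
  # depth of node k (root = 0): count the halvings needed to reach the root
  d <- 0
  while (k > 1) {
    k <- k %/% 2
    d <- d + 1
  }
  d
}

shared_parent <- function(a, b) {
  # smallest shared parent (lowest common ancestor): in heap order a deeper
  # node always has a larger number, so move the larger one up until they meet
  while (a != b) {
    if (a > b) a <- a %/% 2 else b <- b %/% 2
  }
  a
}

tree_affinity <- function(a, b) {
  # 1 when both samples reach the same leaf, 0 when their paths split at the root
  deepest <- max(node_depth(a), node_depth(b))
  if (deepest == 0) return(1)
  node_depth(shared_parent(a, b)) / deepest
}

forest_affinity <- function(leaves_i, leaves_j) {
  # leaves_i, leaves_j: leaf IDs reached by two samples, one entry per tree
  mean(mapply(tree_affinity, leaves_i, leaves_j))
}

# Tree 1: leaves 8 and 9 share parent 4 (depth 2 of 3); tree 2: same leaf 5.
print(forest_affinity(c(8, 5), c(9, 5)))  # 0.8333333, i.e. (2/3 + 1) / 2
```

Normalizing by the deeper leaf keeps every per-tree score in [0, 1]. The forest average is therefore comparable to the binary same-leaf proximity, which corresponds to scoring only exact leaf matches.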